What Is HDR?

Television picture quality has vastly improved over the past few years due to two major broadcast technology advances: 4K UHD resolutions and HDR (high dynamic range). While 4K TVs are able to display more pixels, HDR can offer better pixels with a much wider range of colors and brightness levels.

Traditional SDR (standard dynamic range) video can display roughly 16.7 million colors on 8-bit screens using the industry-standard BT.709 color gamut, which was originally designed for cathode ray tube televisions. Newer screens can now support much wider 10-bit color spaces including BT.2020 for SDR and BT.2100 for HDR, capable of displaying over 1 billion colors.
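The color counts quoted above follow directly from the bit depth. As a minimal sketch, assuming three color channels and counting every possible code value (broadcast video often reserves some code values, so the usable number is slightly lower), the helper `color_count` below is a hypothetical name used only for illustration:

```python
def color_count(bits_per_channel: int, channels: int = 3) -> int:
    """Return how many distinct pixel values fit in the given bit depth."""
    levels = 2 ** bits_per_channel  # code values per channel
    return levels ** channels       # combinations across R, G, and B


for bits in (8, 10):
    print(f"{bits}-bit: {2 ** bits} levels per channel, "
          f"{color_count(bits):,} colors")
```

Going from 8 to 10 bits multiplies the levels per channel by four, which is why the total jumps by a factor of 64.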

HDR not only offers a much greater range of colors in 10-bit but also extends the range of brightness levels, from about 100 nits (the unit used to measure brightness) for SDR up to 10,000 nits for HDR. Most of today’s HDR-compatible TV screens can support just over 1000 nits, which is enough to provide a really good HDR viewing experience with greater contrast between dark and light pixels.

HDR content is already being offered by major streaming platforms as it provides more realism and depth for a cinematic viewing experience. When it comes to live events, especially sports, HDR really brings the action to life with vibrant contrasts between the field, the players, fans, and the outdoor scenery. Though HDR is often included with newer 4K UHD content, there is no reason that HDR can’t be applied to HD content as well.

What Are the Different Types of HDR?

Most large screen TVs on the market today will support at least one consumer HDR format including HDR10, HDR10+, Dolby Vision, and HLG. All of these, with the exception of HLG, require the addition of metadata to enable a television set to correctly display HDR colors and brightness levels. The main difference between HDR10 and HDR10+ is that the latter includes dynamic metadata that can change the HDR settings per scene, whereas the former includes static HDR information for the entire program. Dolby Vision, a proprietary standard, also supports dynamic metadata.
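To make the static versus dynamic distinction concrete, here is a simplified sketch. It assumes each frame is just a list of pixel luminance values in nits and uses two content light level figures commonly carried as HDR10 static metadata, MaxCLL (brightest pixel in the program) and MaxFALL (brightest frame average). The function names and the toy data are hypothetical, and real HDR10+ and Dolby Vision metadata is considerably richer than the single per-scene peak shown here.

```python
from statistics import mean

# Toy program: two scenes, each a list of frames, each frame a list of
# pixel luminance values in nits (hypothetical data).
scenes = [
    [[5, 40, 120], [10, 60, 150]],          # dark interior scene
    [[200, 900, 1000], [300, 800, 950]],    # bright outdoor scene
]


def static_metadata(scenes):
    """One set of values describing the entire program (static approach)."""
    frames = [frame for scene in scenes for frame in scene]
    return {
        "max_cll": max(max(frame) for frame in frames),    # brightest pixel
        "max_fall": max(mean(frame) for frame in frames),  # brightest frame average
    }


def per_scene_metadata(scenes):
    """One entry per scene (dynamic approach, heavily simplified)."""
    return [
        {"scene": index, "peak_nits": max(max(frame) for frame in scene)}
        for index, scene in enumerate(scenes)
    ]


print(static_metadata(scenes))
print(per_scene_metadata(scenes))
```

With static metadata, the dark scene is described by the same peak as the bright one, whereas per-scene values let a display adapt its tone mapping to each scene.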

HLG (hybrid log-gamma) or ARIB STD-B67, the result of a joint development between the BBC and NHK, does not require any additional metadata. HLG instead relies on its unique transfer function (a mathematical equation used to correctly display video) that includes an adapted signal curve supporting a higher dynamic range than SDR. Although HLG does not provide the same level of dynamic range as the other HDR formats, it has the advantage of being completely backward-compatible with SDR displays as they can simply ignore the higher dynamic range information.
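For readers who want to see the curve itself, the sketch below implements the HLG opto-electrical transfer function using the constants published in BT.2100. It assumes scene light has already been normalized to the range 0 to 1, and the function name `hlg_oetf` is chosen here for illustration only.

```python
import math

# Constants from the HLG definition in ITU-R BT.2100
A = 0.17883277
B = 1 - 4 * A                   # about 0.28466892
C = 0.5 - A * math.log(4 * A)   # about 0.55991073


def hlg_oetf(scene_light: float) -> float:
    """Map normalized scene light (0..1) to an HLG signal value (0..1)."""
    if not 0.0 <= scene_light <= 1.0:
        raise ValueError("scene_light must be between 0 and 1")
    if scene_light <= 1 / 12:
        # Lower part of the range: a square-root (gamma-like) curve
        return math.sqrt(3 * scene_light)
    # Upper part of the range: a logarithmic curve for the highlights
    return A * math.log(12 * scene_light - B) + C


for value in (0.0, 1 / 12, 0.5, 1.0):
    print(f"scene light {value:.4f} -> signal {hlg_oetf(value):.4f}")
```

The gamma-like lower segment occupies the bottom half of the signal range, and the logarithmic upper segment carries the highlights, which is the "hybrid" in hybrid log-gamma.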

The other HDR formats are based on the perceptual quantizer transfer function, also referred to as PQ or SMPTE ST 2084. On its own, PQ video can’t be viewed correctly on a normal television screen, as it needs the additional metadata of one of the consumer formats: HDR10, HDR10+, or Dolby Vision. Although none of these HDR formats are backward-compatible with SDR displays, they generally offer better HDR outcomes than HLG, depending on the content.
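Unlike HLG, PQ maps signal values to absolute brightness, with the top of the range corresponding to 10,000 nits. The sketch below implements the PQ curve in both directions using the constants from SMPTE ST 2084; the function names `pq_encode` and `pq_decode` are illustrative choices, and inputs outside the valid range are simply clamped.

```python
# Constants from SMPTE ST 2084 (also used in ITU-R BT.2100)
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PEAK_NITS = 10000.0


def pq_encode(nits: float) -> float:
    """Convert absolute luminance in nits to a PQ signal value (0..1)."""
    y = min(max(nits, 0.0), PEAK_NITS) / PEAK_NITS
    y_m1 = y ** M1
    return ((C1 + C2 * y_m1) / (1 + C3 * y_m1)) ** M2


def pq_decode(signal: float) -> float:
    """Convert a PQ signal value (0..1) back to luminance in nits."""
    p = min(max(signal, 0.0), 1.0) ** (1 / M2)
    return PEAK_NITS * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


for nits in (100, 1000, 10000):
    print(f"{nits:>5} nits -> PQ signal {pq_encode(nits):.3f}")
```

SDR reference white at 100 nits lands at roughly half of the PQ signal range and 1000 nits at roughly three quarters, which leaves the remaining code values for highlights that most current screens cannot yet reproduce.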

Contributing Live Broadcast Content in HDR

All professional television and video cameras support the SDR transfer function for 8-bit color. Newer cameras will also support one if not both HDR transfer functions, PQ and HLG. In order to contribute live content in HDR, the camera itself needs to be set to the correct HDR format, HLG or PQ. Some will even capture video in both SDR and HDR simultaneously.

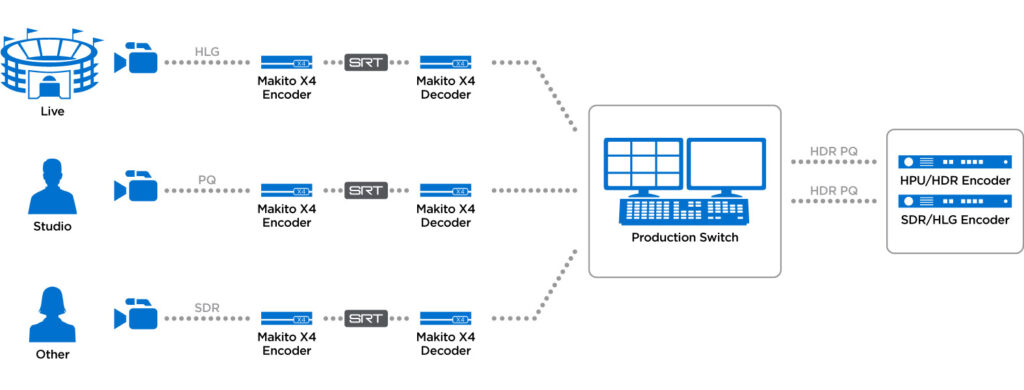

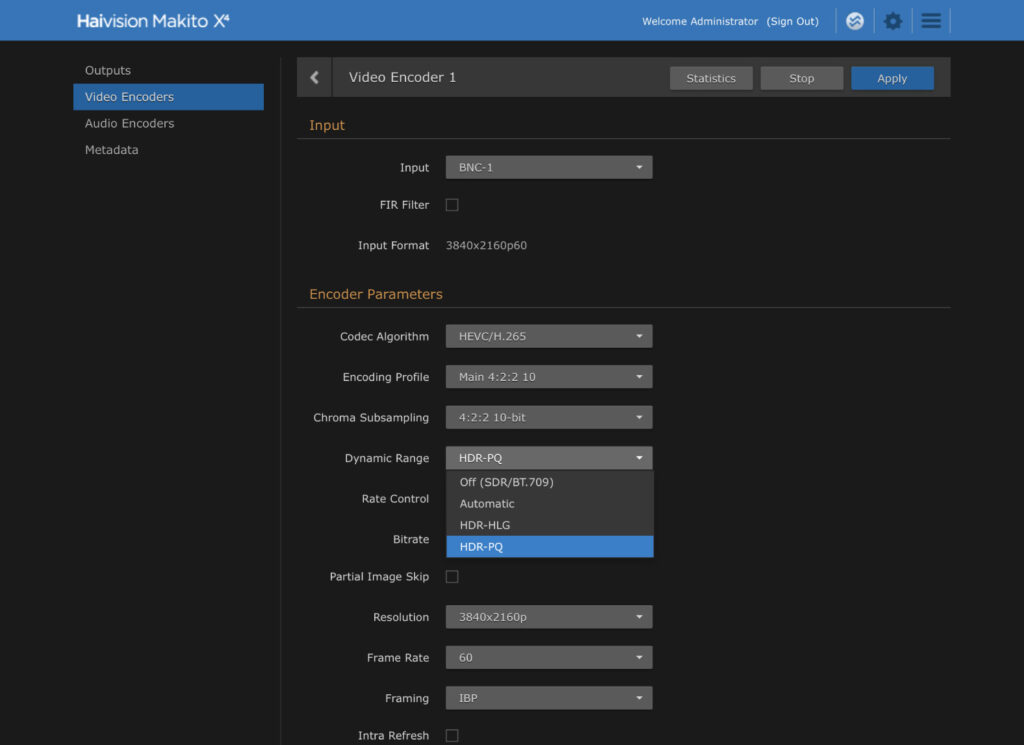

If the live event is happening from a remote location, the HDR camera will also need a video encoder, such as the Makito X4, that can encode in HEVC or H.264 with the correct transfer function included in the outgoing stream. Back at the production facility, an HDR decoder will also need to be able to decode the HDR video content with the PQ or HLG transfer function.
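In H.264 and HEVC streams, the transfer function is typically signaled through numeric code points in the video usability information, as defined in ITU-T H.273. The sketch below lists the code points for SDR (BT.709), PQ, and HLG and checks that both ends of a link agree. The class and function names are hypothetical and do not represent the configuration interface of any particular encoder or decoder.

```python
from dataclasses import dataclass

# transfer_characteristics code points from ITU-T H.273
TRANSFER_CHARACTERISTICS = {"SDR": 1, "PQ": 16, "HLG": 18}
BT709 = 1    # colour_primaries / matrix_coefficients for BT.709
BT2020 = 9   # colour_primaries / matrix_coefficients (non-constant luminance)


@dataclass(frozen=True)
class ColourSignalling:
    colour_primaries: int
    transfer_characteristics: int
    matrix_coefficients: int


def signalling_for(video_format: str) -> ColourSignalling:
    """Return the code points a stream would typically carry for a format."""
    name = video_format.upper()
    if name not in TRANSFER_CHARACTERISTICS:
        raise ValueError(f"unknown format: {video_format}")
    colour = BT2020 if name in ("PQ", "HLG") else BT709
    return ColourSignalling(colour, TRANSFER_CHARACTERISTICS[name], colour)


def transfer_matches(sent: ColourSignalling, expected: ColourSignalling) -> bool:
    """Check that the decoder expects the transfer function the encoder sent."""
    return sent.transfer_characteristics == expected.transfer_characteristics


encoder_side = signalling_for("HLG")
print(encoder_side)
print("match:", transfer_matches(encoder_side, signalling_for("HLG")))
print("match:", transfer_matches(encoder_side, signalling_for("PQ")))
```

If the signaled transfer function is missing or wrong, downstream equipment may interpret HDR video as SDR, which usually shows up as a washed-out or overly dark picture.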

Once the HDR contribution video has been included in the live production workflow using an HDR-compatible video switcher amongst other equipment, the final step is to add the metadata needed for HDR10, HDR10+, or Dolby Vision using an HDR encoder or video processor. In some cases, the same PQ content can be used for two HDR formats simultaneously. For HLG content, no extra metadata is needed.

Encoding in HDR with the Makito X4

In summary, there are several types of HDR formats for consumers, depending on the type of screen or service that is being used. With backward compatibility in mind, some broadcasters may choose to offer on-air content in HLG while streaming in another HDR format, giving online viewing devices the option of switching to an SDR stream. No matter what the exact workflow is when it comes to broadcasting in HDR, it’s important to ensure that your camera and video encoder are both capable of handling HLG, PQ, or both.
